Top Key Players in AI-Driven Robots

ABB

With annual revenue in the U.S. robotics market expected to grow at 5.62% (CAGR), resulting in a market volume of over $9 billion by 2027, John Bubnikovich, ABB US Robotics Division President, is making several predictions on key trends in robotics automation in the US for 2023 and beyond.

In a survey of 1,610 companies carried out by ABB Robotics in 2022, 70% of US businesses said they are planning to re- or near-shore their operations, with 62% indicating they would be investing in robotic automation in the next three years.

ASEA employs 71,000 people and reported revenues of $6.8 billion and income after financial items of $370 million.

News

- Demand for robots will be particularly strong in countries where companies are planning to re- or near-shore their operations to help improve their supply chain stability in the face of global uncertainty.

- Using AI-enabled 3D vision to perform location and mapping functions, Visual SLAM enables AMRs to make intelligent navigation decisions based on their surroundings. Solutions like these enable manufacturers to move away from traditional production lines towards integrated, scalable, modular production cells while optimizing the delivery of components across facilities. "Over the past few years, the capabilities of Artificial Intelligence in the robotics realm have improved significantly," said Bubnikovich.

- The market for mobile robots is expected to grow at 20 percent CAGR through 2026, from $5.5bn to $9.5bn, and ABB's AI-powered 3D vision technology is at the forefront of this growth.
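The mobile-robot figures quoted above can be sanity-checked with the compound annual growth rate formula, end = start × (1 + rate)^years. The sketch below is illustrative only: the three-year horizon is an assumption inferred from the numbers (the source gives only the end year, 2026), and the function names are hypothetical.

```python
def project(start_value, cagr, years):
    """Value after compounding `cagr` once per year for `years` years."""
    return start_value * (1 + cagr) ** years


def implied_cagr(start_value, end_value, years):
    """Annual growth rate that takes start_value to end_value in `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


YEARS = 3  # assumption: the source does not state the start year

projected = project(5.5, 0.20, YEARS)   # $bn after three years at 20%
rate = implied_cagr(5.5, 9.5, YEARS)    # rate implied by $5.5bn -> $9.5bn

print(f"{projected:.2f}")  # prints 9.50
print(f"{rate:.1%}")       # prints 20.0%
```

Under that assumption, the quoted start value, end value, and growth rate agree with each other.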

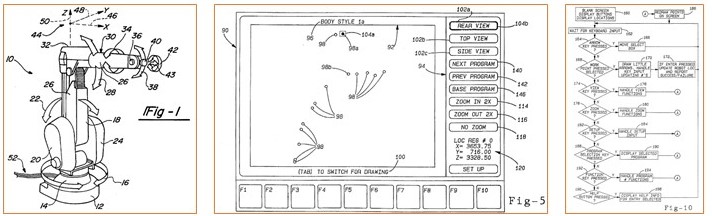

Patent Related to Robot Control System

US5675229A

- Title: Apparatus and method for adjusting robot positioning

- Publication: 1997-10-07

- Coverage: US

- Assignee: Abb Robotics Inc

- Inventor: Thorne Henry

A robot control system for repositioning work points used by the robot control system at which operations occur. The work points are retrieved from the robot controller memory and plotted onto a video display for the operator to view. The operator then designates and selects a work point or work points which are to be repositioned. Using a mouse or other type of cursor control, the operator can then manipulate on the video display the points associated with the work points. After the operator has adjusted the position of the points, in the robot coordinate system, the revised points are then stored in the robot controller memory and used thereafter when positioning the robot.
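As a rough illustration of the workflow in the abstract (retrieve work points, let the operator move the selected ones, store the revised points), here is a minimal Python sketch. The dictionary layout, the point names, and the `reposition` helper are hypothetical and not taken from the patent. The operator's on-screen drag is assumed to have already been converted to an offset in the robot coordinate system.

```python
# Hypothetical sketch: work points keyed by name, coordinates in millimetres
# in the robot coordinate system. None of these names come from the patent.

def reposition(work_points, selected, offset):
    """Return a copy of work_points with the selected points shifted by offset."""
    dx, dy, dz = offset
    revised = dict(work_points)  # copy, so the stored points change only on save
    for name in selected:
        x, y, z = revised[name]
        revised[name] = (x + dx, y + dy, z + dz)
    return revised


# Points as they might be read from controller memory (assumed values).
controller_memory = {"p1": (100, 200, 50), "p2": (150, 200, 50)}

# The operator selects p2 and drags it; the drag is assumed to map to this offset.
controller_memory = reposition(controller_memory, ["p2"], (5, -3, 0))
```

Only the selected point changes; unselected points are stored back exactly as they were read.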

FIG. 1 depicts the mechanical unit 10 of a robot whose positioning may be modified according to the principles of the present invention. The mechanical unit 10 includes a base 12 which is suitably secured to a foundation (not shown) in order to limit movement. A gear box and motor unit 14 attaches to the base 12 and enables rotational movement in the direction of arrows 16. A lower arm 18 attaches to a gear box and motor unit 20 which controls translational movement in the direction of arrows 22. A third motor unit and gear box 24 attaches opposite the robot lower arm 18 from motor unit and second gear box 20. Third motor unit and gear box 24 controls extension of lower arm 18 in the direction of arrows 28. Lower arm unit 18 connects to upper arm unit 30 via fourth motor unit and gear box 32. Rotational movement of upper arm unit 30 in the direction of arrows 34 is controlled by fourth motor unit and gear box 32. Upper arm unit 30 also connects to wrist 36 which is positionable in a swivel movement in the direction of arrows 38 and rotational movement of mounting flange 40 in the direction of arrows 42. Motor unit and gear box 26 controls the swivel movement of wrist 36 in the direction of arrows 38 and rotational movement in the direction of arrows 42. A work tool 43 mounts to mounting flange 40. Work tool 43 varies in accordance with the particular operation to be effected by the robot. By way of example, the work tool may deburr, grind, polish, paint, cut, weld, or perform other operations.


Hanson Robotics

Hanson Robotics' most advanced human-like robot, Sophia, personifies our dreams for the future of AI. As a unique combination of science, engineering, and artistry, Sophia is simultaneously a human-crafted science fiction character depicting the future of AI and robotics, and a platform for advanced robotics and AI research.

For example, she has been used for research as part of the Loving AI project, which seeks to understand how robots can adapt to users' needs through intra- and interpersonal development.

DARPA-funded, advanced locomotion with real-time perception; used for research in robotics and AI, capable of navigating diverse terrains and performing complex movements.

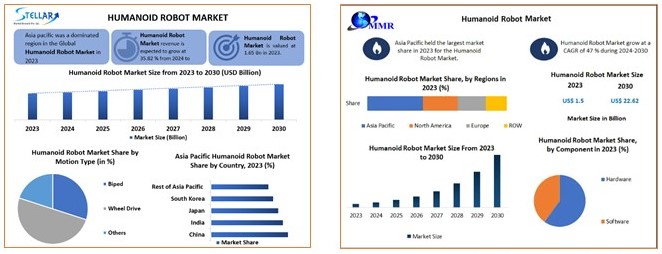

Humanoid robots are professional service robots designed to mimic human motion and interaction, enhancing productivity and reducing costs. Once a futuristic dream, these robots are now becoming commercially viable across various applications. The global humanoid robot market is projected to reach USD 14.07 billion by 2030, growing at a CAGR of 35.82%. The market growth is driven by advancements in robot capabilities and their increasing applicability.

Humanoid robots are used for inspection, maintenance, and disaster response in power plants, performing laborious and dangerous tasks. In space travel, they handle routine duties for astronauts. They also offer companionship for the elderly and sick, act as receptionists, and potentially host human transplant organs. As technology progresses, the range of tasks these robots automate, from dangerous rescues to compassionate care, continues to grow, driving market growth.

Patent Related to Humanoid Robots

US7113848B2

Title: Human emulation robot system

Publication: 2006-09-26

Coverage: US, WO

Assignee: Hanson Robotics Inc.

Inventor: Hanson David

One aspect is a robot system comprising a flexible artificial skin operable to be mechanically flexed under the control of a computational system. The system comprises a first set of software instructions operable to receive and process input images to determine that at least one human likely is present. The system comprises a second set of software instructions operable to determine a response to a perceived human presence, whereby the computational system shall output signals corresponding to the response, such that, in at least some instances, the output signals cause the controlled flexing of the artificial skin.

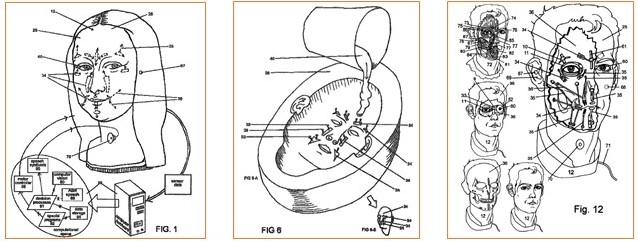

FIG. 1 illustrates one embodiment of a Human Emulation Robot system, including an HED 12, and an electronic control system 13 that governs the operation of various mechanisms in order to emulate at least some verbal and nonverbal human communications. HED may include video sensors 60, audio sensors 67, skin 25, anchors 34, linkages 35, and an audio transducer 70. Data may be sent from the HED sensors to a computer by any suitable communications medium, including without limitation a wireless link, while control signals for speech and motor control may be brought into the embodiment by any suitable communications medium, including without limitation a wireless link. The same or separate communication link(s) could be used for both inputs and outputs and multiple communication links could be used without departing from the scope of the invention. Expressive functions of the face may be achieved using anchors 34, linkages 35 and actuators 33, organized to emulate at least some natural muscle effect. Sensor data may be relayed into a computational system 88, which in the figure comprises a computer and various software, but could exist within microcontroller(s), a computer network, or any other computational hardware and/or software. The functions performed by computational system 88 could also be performed in whole or in part by special purpose hardware. Although the computational system 88 is portrayed in FIG. 1 as existing externally to the HED, alternatively the computational system 88 may be partially or entirely enclosed within the HED without departing from the scope of the invention. Automatic Speech Recognition (ASR) 89 may process audio data to detect speech and extract words and low-level linguistic meaning. Computer Vision 90 may perform any of various visual perception tasks using the video data, such as, for example, the detection of human emotion.

Boston Dynamics

Boston Dynamics is the global leader in developing and deploying highly mobile robots capable of tackling industry's toughest challenges.

The Spot autonomous four-legged robot from Boston Dynamics now offers on-board artificial intelligence software to process data and draw insights out of the environment while keeping human operators out of hazardous environments.

"Spot can maneuver unknown, unstructured, or antagonistic environments and can collect various types of data, such as visible images, 3D laser scans, or thermal images," says Michael Perry, Vice President of Business Development at Boston Dynamics.

- 1992: Marc Raibert spins off from the MIT Leg Lab to start Boston Dynamics, joined soon after by Robert Playter.

- 2013: The first generation Atlas makes its debut, demonstrating full body balance and agility.

- 2020: Spot becomes commercially available, first to early adopters and then to other industrial customers.

- 2021: Stretch is unveiled as our first purpose-built robot, tackling a specific set of warehousing challenges.

"This journey will start with Hyundai—in addition to investing in us, the Hyundai team is building the next generation of automotive manufacturing capabilities, and it will serve as a perfect testing ground for new Atlas applications," the blogpost continues.

Patent Related to Mobility Mapping

US11416003B2

- Title: Constrained mobility mapping

- Publication: 2022-08-16

- Coverage: US, WO, KR, JP, EP, CN

- Assignee: Boston Dynamics Inc

- Inventors: Whitman Eric, Fay Gina Christine, Khripin Alex, Bajracharya Max, Malchano Matthew, Komoroski Adam, Stathis Christopher

A method of constrained mobility mapping includes receiving from at least one sensor of a robot at least one original set of sensor data and a current set of sensor data. Here, each of the at least one original set of sensor data and the current set of sensor data corresponds to an environment about the robot. The method further includes generating a voxel map including a plurality of voxels based on the at least one original set of sensor data. The plurality of voxels includes at least one ground voxel and at least one obstacle voxel. The method also includes generating a spherical depth map based on the current set of sensor data and determining that a change has occurred to an obstacle represented by the voxel map based on a comparison between the voxel map and the spherical depth map. The method additionally includes updating the voxel map to reflect the change to the obstacle.
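The comparison step in the abstract can be sketched as follows. This is a minimal illustration under stated assumptions, not the patented implementation: the voxel size, angular bin width, range margin, data layout, and all function names are hypothetical. It handles only one kind of change, an obstacle that is no longer there, detected when the current sensor data "sees through" a voxel that the map still marks as an obstacle.

```python
import math

VOXEL_SIZE = 0.1               # voxel edge in metres (assumed)
ANGLE_BIN = math.radians(5.0)  # angular width of one depth-map cell (assumed)


def direction_bin(point, origin):
    """Return ((azimuth bin, elevation bin), range) of point as seen from origin."""
    dx, dy, dz = (p - o for p, o in zip(point, origin))
    rng = math.sqrt(dx * dx + dy * dy + dz * dz)
    azimuth = math.atan2(dy, dx)
    # Clamp guards against rounding pushing the ratio just outside [-1, 1].
    elevation = math.asin(max(-1.0, min(1.0, dz / rng))) if rng > 0 else 0.0
    return (round(azimuth / ANGLE_BIN), round(elevation / ANGLE_BIN)), rng


def spherical_depth_map(points, origin):
    """Nearest measured range per direction bin, built from current sensor points."""
    depth = {}
    for point in points:
        key, rng = direction_bin(point, origin)
        depth[key] = min(rng, depth.get(key, math.inf))
    return depth


def voxel_center(index):
    """Centre of a voxel given its integer (i, j, k) index."""
    return tuple((i + 0.5) * VOXEL_SIZE for i in index)


def remove_vanished_obstacles(voxel_map, depth, origin, margin=0.2):
    """Delete obstacle voxels that the current depth map sees past; return them."""
    removed = []
    for index, label in list(voxel_map.items()):
        if label != "obstacle":
            continue  # ground voxels are left alone in this sketch
        key, rng = direction_bin(voxel_center(index), origin)
        seen = depth.get(key)
        if seen is not None and seen > rng + margin:
            del voxel_map[index]
            removed.append(index)
    return removed


# Map built from the original sensor data: one obstacle and one ground voxel.
voxel_map = {(10, 0, 0): "obstacle", (5, 0, -1): "ground"}
sensor_origin = (0.0, 0.05, 0.05)

# Current scan: the beam along the same direction now returns from 3 m away.
current_depth = spherical_depth_map([(3.0, 0.05, 0.05)], sensor_origin)
removed = remove_vanished_obstacles(voxel_map, current_depth, sensor_origin)
```

Storing the depth map per viewing direction is what makes the check cheap: each obstacle voxel needs one lookup in its own direction bin rather than a search through the whole scan.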

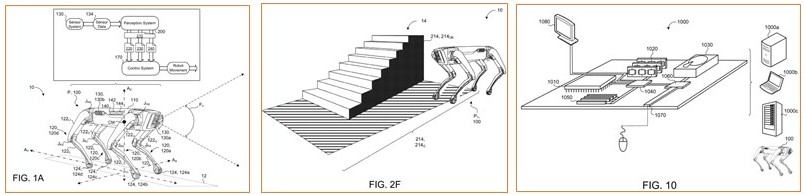

In some implementations, as shown in FIGS. 1A and 1B, the robot 100 includes a control system 170 and a perception system 200. The perception system 200 is configured to receive the sensor data 134 from the sensor system 130 and to process the sensor data 134 into maps 210, 220, 230, 240. With the maps 210, 220, 230, 240 generated by the perception system 200, the perception system 200 may communicate the maps 210, 220, 230, 240 to the control system 170 in order to perform controlled actions for the robot 100, such as moving the robot 100 about the environment 10. In some examples, by having the perception system 200 separate from, yet in communication with the control system 170, processing for the control system 170 may focus on controlling the robot 100 while the processing for the perception system 200 focuses on interpreting the sensor data 134 gathered by the sensor system 130. For instance, these systems 200, 170 execute their processing in parallel to ensure accurate, fluid movement of the robot 100 in an environment 10.

In some examples, a classification by the perception system 200 is context dependent. In other words, as shown in FIGS. 2F and 2G, an object 14, such as a staircase, may be an obstacle for the robot 100 when the robot 100 is at a first pose P, P1 relative to the obstacle. Yet at another pose P, for example as shown in FIG. 2G, a second pose P2 in front of the staircase, the object 14 is not an obstacle for the robot 100, but rather should be considered ground that the robot 100 may traverse. Therefore, when classifying a segment 214, the perception system 200 accounts for the position and/or pose P of the robot 100 with respect to an object 14.
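The staircase example can be made concrete with a small sketch. The patent excerpt does not say how pose enters the classification, so the rule used here is purely an assumption for illustration: the staircase counts as ground only when the robot approaches it roughly along the direction in which the stairs ascend. The function name, the 2D simplification, and the 30-degree tolerance are all hypothetical.

```python
import math


def classify_staircase(robot_xy, stairs_xy, ascent_heading,
                       tolerance=math.radians(30)):
    """Label a staircase "ground" or "obstacle" depending on the robot's pose.

    ascent_heading is the direction (radians) in which the stairs climb.
    """
    dx = stairs_xy[0] - robot_xy[0]
    dy = stairs_xy[1] - robot_xy[1]
    approach = math.atan2(dy, dx)  # direction from the robot to the stairs
    # Smallest signed difference between the two angles, in [-pi, pi].
    diff = math.atan2(math.sin(approach - ascent_heading),
                      math.cos(approach - ascent_heading))
    return "ground" if abs(diff) <= tolerance else "obstacle"


# Stairs at the origin, climbing along +x (assumed layout).
in_front = classify_staircase((-2.0, 0.0), (0.0, 0.0), 0.0)     # like pose P2
from_side = classify_staircase((0.0, -2.0), (0.0, 0.0), 0.0)    # like pose P1
```

The same object gets two different labels because the label is a function of the object and the robot's pose together, which is the point the passage makes.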


Starship

Starship Technologies is using autonomous delivery robots to revolutionise local delivery. Delivering items such as groceries, food takeaways and packages, Starship now operates in over 60 locations across the world. Launched in 2014 by Skype co-founders Ahti Heinla and Janus Friis, Starship now has delivery robots completing tens of thousands of autonomous deliveries every day.

By substituting vehicle journeys, personal delivery devices (PDDs) have to date cumulatively kept more than 400 tons of CO2 emissions from entering the atmosphere, which is equivalent to almost 1.7 million miles by car in the UK. Looking ahead, and at a larger scale, these estimates are corroborated by similar findings positing a CO2 reduction of 407 million short tons in the US between 2025 and 2035 with the adoption of autonomous delivery robots in general, equivalent to 88 million passenger vehicles off the road for one year. NOx savings would come in at 236,000 tons.

We estimate that if PDDs achieve 2.6% online grocery delivery penetration, it would result in £340 million of private sector investment between 2023 and 2030, equating to average annual investment of nearly £50 million in PDD related infrastructure and support. If PDD share doubles to 5.0%, total required investment would stand at £655 million.

Starship Technologies, the company making science fiction a reality by putting delivery robots on streets in the US and Europe, is announcing it has raised $90 million, co-led by Plural and Iconical.

Patent Related to Self-delivering Robots

US9741010B1

Title: System and method for securely delivering packages to different delivery recipients with a single vehicle

Publication: 2017-08-22

Coverage: US

Assignee: Starship Tech Oü

Inventor: Heinla Ahti

A delivery system and method for delivering packages to multiple recipients uses a mobile robot having a delivery package space suitable for accommodating at least two packages, at least one package sensor configured to output first data reflective of the presence or absence of packages within the package space, at least one processing component configured to receive and process the package sensor's first data and at least one communication component configured to at least send and receive second data. The mobile robot travels to a first delivery location, permits a first recipient to access the package space, and identifies the first recipient's package to the first recipient. The system and method use data from the package sensor to verify that the first recipient removed only his or her package, if other package(s) are also present.

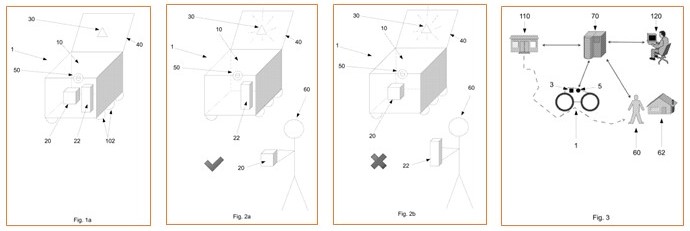

In some other embodiments, the packages 20, 22 can comprise a package ID 32, such as a QR code 32 or a barcode 32 placed directly on the packages 20, 22. This is schematically depicted in FIG. 1d. In such embodiments, the first delivery recipient (not depicted) can, for example, scan the code with a mobile device to confirm that the correct package 20, 22 has been retrieved. The package sensor 30 can also comprise a combination of sensors. For example, a combination of a weight sensor 30 and a package ID 32 can be used. In such embodiments, the first delivery recipient (not pictured) can be asked to scan a code on their package, and the robot can double check that only the package that was scanned was removed via weighing the package space 10 after package retrieval. Additionally or alternatively, a combination of a motion sensor 30 and a package ID 32 can be used. The motion sensor 30 can detect when the first delivery recipient retrieves a package 20, 22 and the first delivery recipient can then be asked to scan the package ID 32 on their mobile device. If the package ID 32 is not correct, the first delivery recipient can return the package 20, 22 to the package space 10 (which can be detected by the motion sensor 30) and then retrieve the correct package 20, 22 and scan it on their mobile device.
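The weight-sensor-plus-package-ID combination described above amounts to a two-part check, sketched here in Python. Everything in the sketch is an assumption made for illustration: the function name, the package identifiers, the weights in kilograms, and the 50 g tolerance are hypothetical, and a real system would also have to deal with sensor noise and retries.

```python
def verify_pickup(package_weights, recipient_package, scanned_id,
                  weight_after, tolerance=0.05):
    """Return True if only the recipient's own package left the package space.

    package_weights: weight in kg of each package loaded before the stop.
    weight_after: weight-sensor reading of the package space after retrieval.
    """
    if scanned_id != recipient_package:
        return False  # wrong package scanned; it should go back in the space
    # Weight that should remain if exactly the scanned package was taken.
    expected_after = sum(weight for pid, weight in package_weights.items()
                         if pid != scanned_id)
    return abs(weight_after - expected_after) <= tolerance


# Two packages on board (assumed IDs and weights).
loaded = {"pkg-20": 1.2, "pkg-22": 0.8}

ok = verify_pickup(loaded, "pkg-20", "pkg-20", weight_after=0.8)
took_both = verify_pickup(loaded, "pkg-20", "pkg-20", weight_after=0.0)
wrong_scan = verify_pickup(loaded, "pkg-20", "pkg-22", weight_after=1.2)
```

The scan confirms which package was taken, and the weight reading confirms that nothing else went with it, which is why the passage pairs the two sensors.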

The robot 1 can be adapted to communicate with a server 70 via a communication component 5. The server 70 can communicate with a remote terminal 120 and with the first delivery recipient 60. In some embodiments, the robot 1 can also directly communicate with the remote terminal 120 and/or with the first delivery recipient 60 via the robot's communication component 5.

SoftBank Robotics

Fumihide Tomizawa began his career at Nippon Telegraph and Telephone Corporation in 1997 before joining SoftBank Group in 2000. He led the robotics project at SoftBank in 2011, which was founded to be the core bearer of SoftBank Group's vision, and was appointed as the President and CEO of SoftBank Robotics Group Corp. in 2014.

A leader in robot solutions, SoftBank Robotics has been contributing to the technology's development since we launched Pepper, our first robot capable of recognizing human emotions, in 2014. There followed an AI autonomous cleaning robot in 2018, a multi-tray delivery robot in 2021, and automated logistics solutions consulting in 2022. We have 21 offices in 10 countries, and our robots are used in more than 70 countries worldwide.

Our vast, expanding trove of real-world robot data and the marvelous technology of our partners worldwide: as a robot integrator, these are the unmatched resources we are leveraging to meet every conceivable need of the developers who want robots to succeed and of the users who are eager to adopt them.

News – SoftBank Robotics

Starting with the AI cleaning robot series "Whiz", released in 2019, SoftBank Robotics Group (SBRG) launched the food service delivery robot "Servi" in 2021. Since 2022, SBRG has also offered professional indoor mobility robots: "Scrubber 50" from Gaussian Robotics for floor cleaning and "Keenbot" from Keenon Robotics for delivery.

Currently, SBRG deploys its products in more than 70 countries and has offices in 12 locations, and approximately 20,000 units of the "Whiz" series had shipped worldwide as of April 2022.

"Whiz" is an autonomous AI vacuum-cleaning robot intended mainly for floors such as carpets. Whiz supports the cleaning industry's workforce and works alongside people as a partner to maximize efficiency and optimize cleaning jobs.

"Servi" is a self-driving food service delivery robot developed for the purpose of working with employees at restaurants, hotels, retail stores, etc. Serving and transporting can be done with simple operations, and employees can devote more time to customer service.

The robot is equipped with up to four trays and supports large-capacity serving and clearing of dishes. Its low center of gravity and robust housing provide stable transport, and its touch panel makes it easy to operate.

Patent Related to Robots Delivering and Packaging

JP2021026516A

Title: Delivery system, delivery method and delivery program

Publication: 2021-02-22

Coverage: JP

Assignee: Softbank Robotics Corp

Inventor: Yano Satoshi

PROBLEM TO BE SOLVED: To provide a delivery system, a delivery method and a delivery program that benefit users by improving delivery efficiency in product sales. SOLUTION: When a delivery system 1 receives a plurality of orders from the same user 10 within a predetermined time, the delivery system packs and delivers the products indicated by the plurality of orders together if the shipping cost of packing and delivering them together is lower than the shipping cost of packing and delivering them individually, and provides cash back 102 to the user who placed the orders.
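The decision rule in this abstract is a simple cost comparison, sketched below. The abstract does not say how the cash-back amount is set, so returning half of the shipping saving is an assumption, as are the function name, the result layout, and the yen amounts. Grouping orders from the same user within the predetermined time is assumed to have happened before this function is called.

```python
def plan_delivery(individual_costs, combined_cost, cashback_rate=0.5):
    """Decide whether to pack a user's orders together and how much to refund.

    individual_costs: shipping cost of each order if packed and sent alone.
    combined_cost: shipping cost if all the orders are packed together.
    cashback_rate: share of the saving returned to the user (assumed).
    """
    separate_total = sum(individual_costs)
    if combined_cost < separate_total:
        saving = separate_total - combined_cost
        return {"pack_together": True, "cashback": saving * cashback_rate}
    # Combining is not cheaper, so ship each order on its own.
    return {"pack_together": False, "cashback": 0}


# Two orders from the same user within the time window (assumed yen amounts).
cheaper = plan_delivery([500, 500], combined_cost=700)
not_cheaper = plan_delivery([500, 500], combined_cost=1200)
```

With these assumed figures, the first case is packed together and the second is shipped separately with no cash back.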

The delivery unit 135 has a function of arranging to deliver the designated product to the place designated by the user when the user requests the purchase of the product. The delivery unit 135 packs the product and arranges the delivery according to the designation of the combination of the product and the packing material transmitted from the calculation unit 134. According to the arrangement, for example, a robot arm or the like provided in the warehouse 101 packs the product indicated by the product purchase request received by the reception unit 131 into the packing material specified by the specific unit 133, and the packed product is handed over to the delivery vehicle that delivers the goods.

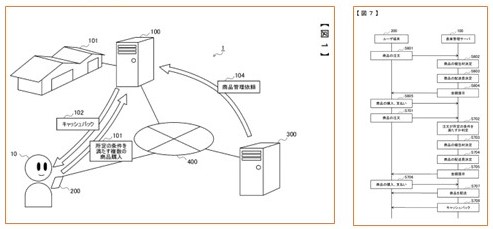

FIG. 1 is a system diagram showing a configuration example of the delivery system 1 according to the present embodiment. As shown in FIG. 1, in the delivery system 1, a seller who sells a product uses his or her own seller terminal 300 to ask a warehouse management server 100 corresponding to the warehouse 101 to manage the product and deliver it to customers. That is, the seller terminal 300 transmits to the warehouse management server 100 a product management request 104 that includes information such as which product is to be managed, its quantity, and the management period. In response, the warehouse management server 100 manages the product in return for an appropriate consideration from the seller associated with the seller terminal 300. The warehouse management server 100 may receive requests from a plurality of different sellers, or may receive requests from fixed sellers.

About Effectual Services

Effectual Services is an award-winning Intellectual Property (IP) management advisory & consulting firm offering IP intelligence to Fortune 500 companies, law firms, research institutes and universities, and venture capital/PE firms globally. Through research & intelligence, we help our clients make critical business decisions backed by credible data sources, which in turn helps them achieve their organisational goals, foster innovation and reach milestones on time while optimising costs.

We are one of the largest IP & business intelligence providers and have served clients globally for over a decade. Our multidisciplinary teams of subject matter experts have deep knowledge of best practices across industries, are adept at benchmarking quality standards and use a combination of human and machine intellect to deliver quality projects. A global footprint in over 5 countries helps us bridge boundaries and work seamlessly across multiple time zones, living up to the core of our philosophy: innovation is global, and so are we.
